Artificial vs. human intelligence: Can academia safely and effectively use AI?

Over the past year, Maryville College (MC) has adopted approaches to artificial intelligence that aim for students to use it as a tool without taking away from their learning.

At the end of the 2024-2025 academic year, MC updated its policies on AI to reflect that. The summary of the policy guidelines says, “Maryville College supports the ethical and responsible use of generative AI tools to enhance teaching, learning, research and operations. This policy outlines expectations for transparency, integrity and data privacy, while encouraging innovation and critical engagement with AI technologies.”

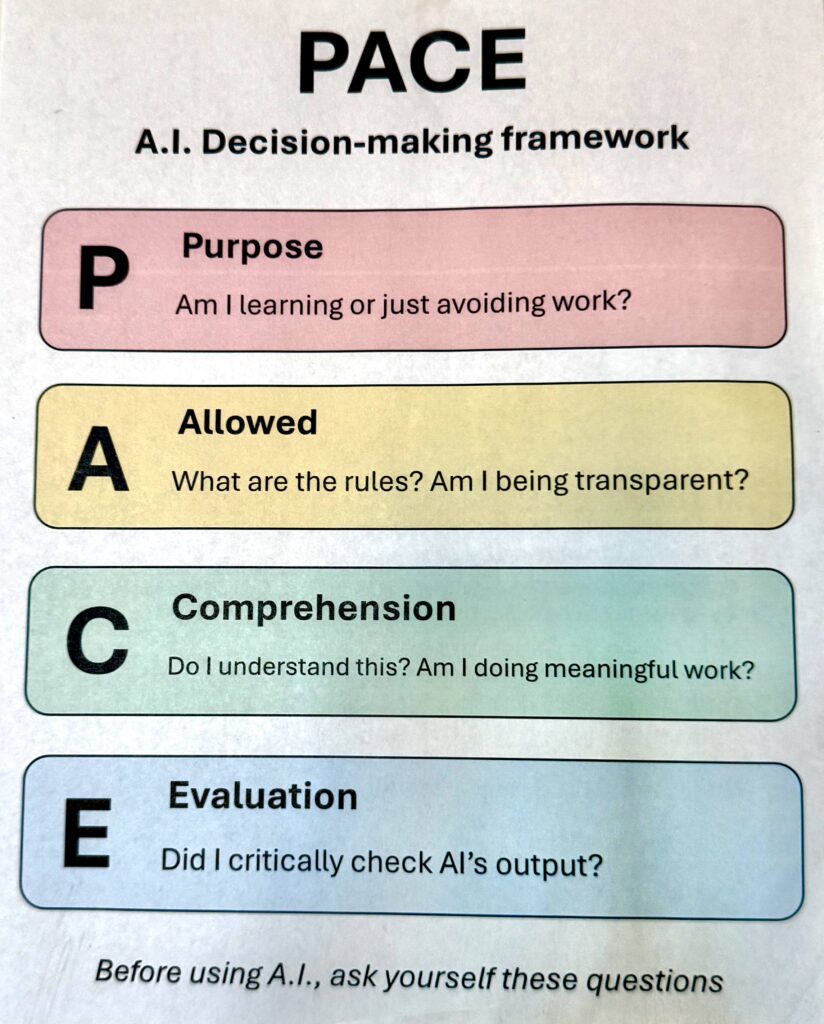

MC adopted the motto, “Be a human,” for its approach to AI. All over campus, students and faculty can see the PACE framework, which stands for Purpose, Allowed, Comprehension and Evaluation. The framework is in place for those who use AI to assist them in coursework, encouraging them to think about how they are using it. It helps people ensure they are using AI ethically and not taking away from learning.

Faculty and students on campus have diverse opinions about the policy changes that allow and encourage the ethical use of AI.

Jan Taylor, senior lecturer in composition, said that she thinks AI is interesting, but that there should be policies and rules governing its use in an academic setting.

“I favor the approach of helping students understand AI’s limitations and where [AI] might be useful,” said Taylor. “In an educational setting, some uses of AI can strip learning from assignments. That means wasted tuition dollars and wasted time in a rich college setting that has the potential to lay the foundation for a meaningful life and successful career. The PACE framework was created to address these concerns.”

Professor of History Dr. Aaron Astor said that AI can be beneficial for research, but that AI can have negative consequences when it is used as a substitute for learning.

“As a liberal arts college, our goal is to teach students how to think critically,” said Astor. “In the Humanities, that means the entire writing process [such as] brainstorming, outlining, researching, drafting, revising […] are all essential to critical thinking.”

According to Astor, the outcomes of AI are mostly negative, but it is now a significant part of reality, so everyone should learn how to use AI productively.

Associate Professor of Sociology Andrew Gunnoe discussed how he encourages his senior students to use AI to develop research questions and help them with the organization of their senior thesis.

“I have mixed feelings about how AI can effectively be implemented into coursework,” Gunnoe said. “[But] I do think AI can be a useful tool for helping students construct research questions, search for information, and refine their own knowledge about specific subjects.”

Gunnoe also noted the impact that artificial intelligence will have on the future. “I understand the emphasis we are placing on how AI is changing the classroom experience, but I think we also need to spend time thinking about the broader social and environmental impacts [this technology has] on our society moving forward,” Gunnoe said. “A willful resignation to the adoption of this technology as it is, under its current power structure and with its current trajectory, is to abandon much of the promise that this technology holds for the future.”

Rachel Littrell, an adjunct instructor in English at MC, said that she believes that AI has the potential to be used ethically in a school setting.

“‘Assist’ is a keyword [when it comes to using AI],” said Littrell. “AI should never be used in place of authentic, individual creativity and thought.”

According to Littrell, students should use AI only to engage with their education and coursework. “I believe that AI can positively affect the learning process if the individual student already has a strong foundational understanding of the assignment or the material,” Littrell said. “As an example, having AI proofread an essay for grammatical errors is only beneficial to the student if that student [already has an understanding of the grammar used]. Otherwise, the grade itself may have been positively impacted in the end due to the absence of errors, [but] the reason for the grade in the first place – to indicate where and how learning and improvement can take place – is null, [because] the errors will continue to occur.”

Many MC students also hold opinions regarding the use and incorporation of AI on campus. Je’kobia Baldwin (‘29) said that she thinks many professors are promoting AI far too much.

“It’s getting to a point where it seems like we as students are being told to [turn to] AI if we ever need help. But that help is really just a cheat sheet that doesn’t really give much accurate information,” said Baldwin.

Baldwin said that while she has used AI to assist her in coursework, she does not use it often, and only when she has no idea what to write about or what questions to ask.

Charlotte Bollschweiler (‘28), an art student at MC, also said that she has mixed feelings about the use of AI.

“While I think there’s no way to go around it – it’s just become an inevitable part of life at this point – I still get taken aback when a professor mentions using it for an assignment in some way,” said Bollschweiler. “My thinking is, I’m paying to be here at this school. Why should I let a robot do the learning and growing for me?”

Katie Hale (‘27), another art student at MC, noted that she believes many of her professors have had good approaches to the use of AI in an academic context.

“I think, in the classroom, learning how to appropriately use AI is going to become a necessity, considering how much AI is already becoming part of the workforce,” said Hale.

Hale believes that using AI minimally and as a starting point can be beneficial; however, it can take away from learning if someone is too reliant on AI.

“AI really stunts the joy of learning and figuring out how to do things for yourself,” said Hale. “When it comes to final work, I don’t think students should be using AI.”

Austin McKee (‘26), a Sociology and Writing/Communications student, said it is dangerous to continue implementing AI into coursework and that, with the social and environmental impacts, AI should not continue to be used.

“While higher academia seems to understand the negative impact of AI, both physically and socially, it still feeds into it,” said McKee. “It’s disheartening to see people you respect and admire through an academic lens align themselves with something that you know is detrimental to many.”

McKee said that when people use AI, even merely as a tool, they do not engage with and truly understand topics as well as they can by learning traditionally. He also mentioned the declining literacy rate in the U.S., suggesting that now, more than ever, it is important that people focus on the authentic, human aspect of creativity and prosperity.

“If you were to get a degree and use AI for the majority of your coursework, did you really learn anything? I don’t see the point in wasting any time at an institution for years and years if you would rather take the easy way out of your education through AI, than dare to be challenged and grow,” McKee said.

Reese Brackins (‘28), a math student, said that she often uses AI to help her study by creating study guides that she can use to do the studying herself.

Brackins warned that AI can be inaccurate on some things, especially math. She said that, because there are many ways one can solve a problem, AI programs may take incorrect steps in solving math problems. Brackins also mentioned that AI programs can simply get the answer wrong, leaving students without the help they need to solve a problem on their own.

“AI doesn’t have the human capability of problem-solving, so it doesn’t know how to break it down,” said Brackins.

Posting of the PACE framework (Purpose, Allowed, Comprehension, Evaluation) on the MC campus in Anderson Hall. Photo courtesy of Carrie Jones.
